How to Detect Impersonation in Remote Exams

Learn how to detect impersonation in remote exams with layered identity checks, liveness, continuous authentication, behavioral signals, and device integrity controls.

Nurali Sarbakysh

CEO

I have reviewed thousands of remote exam sessions across education and government programs. The same pattern repeats every year. When stakeholders lose trust, it is rarely because someone looked away. Trust collapses when the wrong person takes the exam. Impersonation is identity fraud, not a behavior issue, and it needs a different approach than traditional online proctoring. In this guide I explain how impersonation happens, how to detect it with evidence, and how to implement controls without creating a privacy or operational disaster.

Impersonation in remote exams is any case where a registered candidate does not complete the assessment themselves. It includes full substitution, a mid-exam swap, or assisted proxy testing where someone off-camera drives the answers. In high-stakes exams, hired test takers and collusion networks are common. In recent years we have also seen synthetic identity attempts that rely on virtual webcams, video injection, replay loops, and deepfake overlays. That is why a one-time ID check at login is not enough.

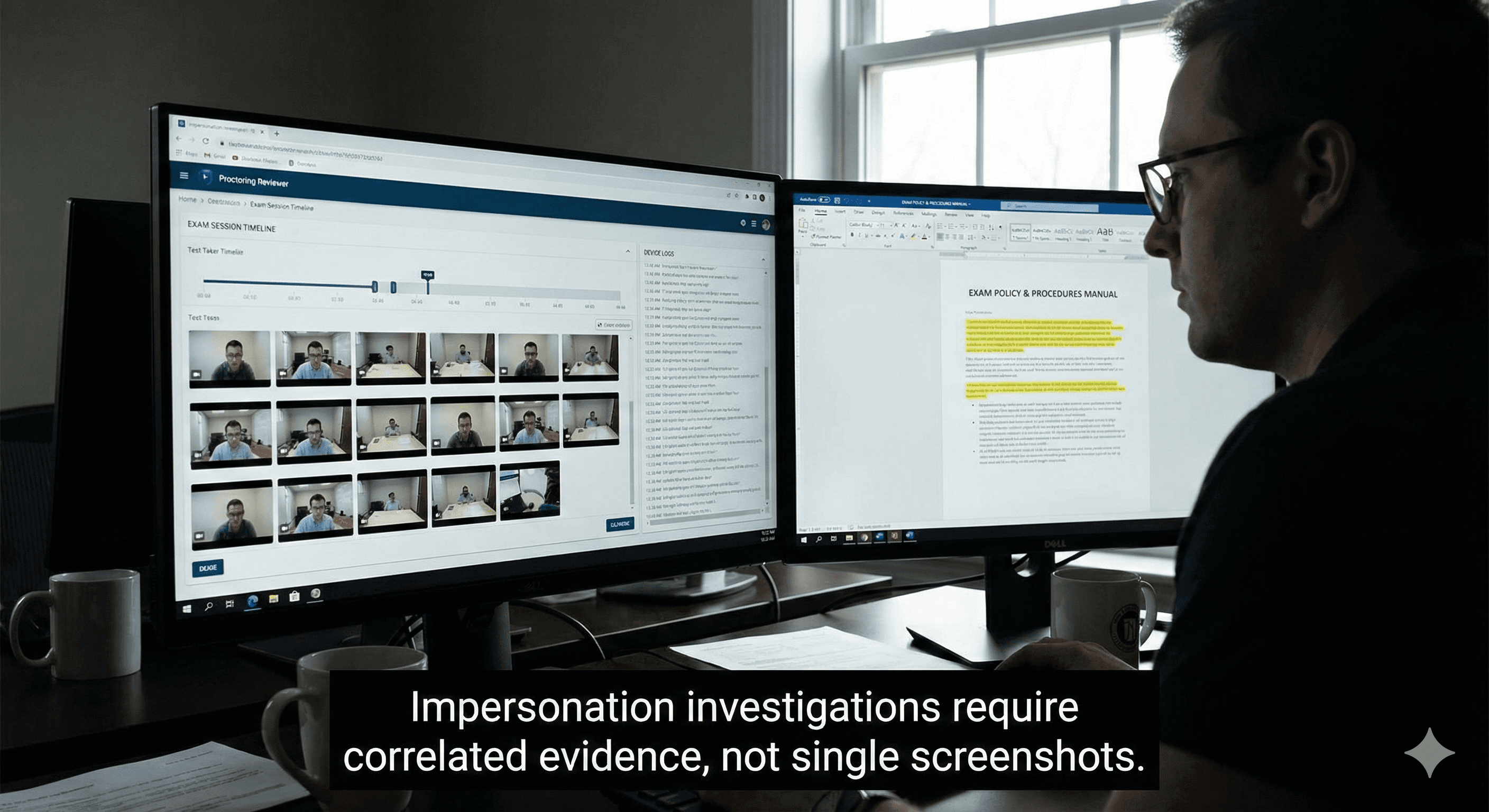

The most important shift is this. Impersonation detection must be layered. A single signal is never reliable. A secure and defensible decision comes from correlated evidence across identity verification, liveness detection, continuous authentication, behavior analytics, and device integrity. A good system does not just flag suspicion. It produces an evidence packet that supports review, appeals, and audits.

Why impersonation is hard to catch remotely

In a physical test center, identity is reinforced by presence. In remote exam security, identity becomes a continuous variable. The camera is a narrow sensor. Lighting changes, bandwidth drops, and candidates move. At the same time, attackers use tools designed to look normal. If the institution relies only on a webcam feed, it will miss the bypass path. The bypass path is usually device level. Virtual machine use, remote access tools, or a virtual camera can replace what the proctor sees.

Another reason impersonation is hard to catch is governance. Even when a system detects anomalies, organizations often lack clear thresholds and a reviewer workflow. That increases false accusations, creates appeals pressure, and erodes trust further. Detection without policy creates conflict.

Expert Tip

"Do not treat impersonation as a single event. Treat it as a continuity problem. The question is simple: did identity remain stable from start to finish?"

The evidence ladder - weak signals vs strong proof

Most teams start by watching behavior. That creates weak indicators: gaze changes, head turns, or nervous movement. These can support a case but rarely prove impersonation. Strong evidence comes from identity discontinuity, confirmed by multiple sources. For example, face continuity breaks after the session starts, the device fingerprint changes, and response timing shifts sharply. Another strong pattern is face absence followed by a sudden step change in typing cadence and answer accuracy.

This is why risk scoring works only when it aggregates signals. One anomaly triggers review. Correlated anomalies build a case.
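To make the difference between "one anomaly" and "correlated anomalies" concrete, here is a minimal Python sketch. It assumes each detection layer emits simple `Signal` records with a source label and a timestamp; the labels, the 120-second window, and the two-source rule are illustrative assumptions, not a prescribed scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    source: str  # detection layer, e.g. "face", "device", "behavior" (illustrative labels)
    name: str    # hypothetical signal name, e.g. "continuity_break"
    t: float     # seconds since session start


def triage(signals, window_s=120.0, min_sources=2):
    """Classify a session as 'clear', 'review', or 'case'.

    Any anomaly earns a human review. Anomalies from at least `min_sources`
    distinct layers inside one `window_s` window escalate to 'case'.
    """
    if not signals:
        return "clear"
    ordered = sorted(signals, key=lambda s: s.t)
    for i, anchor in enumerate(ordered):
        # Distinct layers that fired within window_s after this anchor signal
        sources = {s.source for s in ordered[i:] if s.t - anchor.t <= window_s}
        if len(sources) >= min_sources:
            return "case"
    return "review"
```

The window is the design choice that matters: a face continuity break and a device change 30 seconds apart corroborate each other, while the same two events an hour apart may be unrelated and stay at "review".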

Layer 1 - Identity verification before the exam

Start with identity proofing. Capture a government ID, then capture a live selfie. Run face match and basic document checks. This step blocks casual substitution and sets a baseline for later comparison. For repeat test takers, enrollment strengthens accuracy. Enrollment means the system builds a reference profile from prior verified sessions.
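A face match at onboarding usually reduces to comparing numeric face embeddings. The sketch below assumes embeddings are already produced by some face recognition model (the article does not name one), uses cosine similarity with a placeholder threshold of 0.6 that a real program would calibrate on its own data, and builds the enrollment reference profile as a simple mean of embeddings from prior verified sessions. The function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0 or nb == 0:
        raise ValueError("zero-length embedding")
    return dot / (na * nb)


def enroll(prior_embeddings):
    """Reference profile: element-wise mean of embeddings from prior verified sessions."""
    if not prior_embeddings:
        raise ValueError("need at least one verified embedding")
    n = len(prior_embeddings)
    dim = len(prior_embeddings[0])
    return [sum(e[i] for e in prior_embeddings) / n for i in range(dim)]


def face_match(reference_emb, selfie_emb, threshold=0.6):
    """Return (matched, score). The threshold is a placeholder, not a recommendation."""
    score = cosine_similarity(reference_emb, selfie_emb)
    return score >= threshold, score
```

The same `face_match` call works against the ID photo for a first-time candidate or against `enroll(...)` output for a repeat test taker, which is how enrollment strengthens the baseline.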

Identity verification should be designed for the exam type. Schools and olympiad organizers need low friction. Government agencies and certification programs need higher assurance. The key is transparency. Candidates should know what data is collected and why.

If your program needs practical identity verification with audit-ready records, the TrustExam.ai online proctoring platform supports ID capture, face match workflows, and evidence logging that fits regulated environments. Learn how it works: https://trustexam.ai

Layer 2 - Liveness detection and anti-spoofing

Liveness detection protects against presentation attacks. It helps detect photo spoofing and basic replay attempts. In proctoring contexts, it matters because attackers often try to feed the system a static image or prerecorded loop.

Liveness does not solve everything. A high-quality video injection can still look live. That is why liveness must be combined with device integrity. Still, liveness is an essential layer because it raises the effort required for spoofing and provides a measurable control aligned with security guidance on presentation attack detection, such as the ISO/IEC 30107 series and NIST guidance.
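One common form of active liveness is challenge-response: the system asks for a random sequence of actions and checks that the camera shows exactly that sequence, promptly. The sketch below covers only the protocol side. It assumes a hypothetical on-device action detector that reports what the candidate did; the action names, the 10-second limit, and the response fields are illustrative. Passive liveness (no prompts) is a separate technique not shown here.

```python
import secrets
import time

# Illustrative prompt set; a real deployment depends on what its detector can recognize
ACTIONS = ("blink", "turn_left", "turn_right", "smile")


def issue_challenge(length=3):
    """Create an unpredictable action sequence bound to a one-time nonce."""
    return {
        "nonce": secrets.token_hex(8),
        "sequence": [secrets.choice(ACTIONS) for _ in range(length)],
        "issued_at": time.monotonic(),
    }


def verify_response(challenge, response, max_seconds=10.0):
    """Check a response dict with 'nonce', 'actions', and 'responded_at'.

    'actions' is assumed to come from a hypothetical action detector that
    reports, in order, what the candidate actually did on camera.
    """
    if not secrets.compare_digest(response["nonce"], challenge["nonce"]):
        return False  # response belongs to a different (possibly replayed) challenge
    if response["responded_at"] - challenge["issued_at"] > max_seconds:
        return False  # too slow: leaves time to fabricate or edit a response
    return list(response["actions"]) == challenge["sequence"]
```

A static photo or a prerecorded loop cannot anticipate the random sequence. A real-time injection pipeline can still follow prompts, which is exactly why this layer has to be paired with device integrity checks.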

Layer 3 - Continuous authentication during the session

This is the layer most institutions underestimate. Continuous authentication means the session continuously checks that the same person remains present. It includes face presence detection, face continuity checks, and re-authentication triggers when conditions change. Triggers can include extended face absence, multiple faces in frame, extreme lighting shifts, or suspicious device events.

The goal is not to interrupt candidates constantly. The goal is to prevent a mid-exam swap. A clean approach uses low-friction checks that activate only when risk increases.
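The trigger logic described above can be expressed as a small state machine over per-frame observations. This sketch assumes an upstream face detector supplies a face count and, when exactly one face is visible, a similarity score against the enrolled baseline. The `Frame` type, the 15-second absence limit, and the 0.6 similarity floor are hypothetical. Lighting and device-event triggers are omitted for brevity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Frame:
    t: float                    # seconds since session start
    face_count: int             # faces detected (from an assumed detector)
    similarity: Optional[float] # similarity to enrolled baseline; None if not exactly one face


def reauth_triggers(frames, absence_s=15.0, min_similarity=0.6):
    """Return (time, reason) pairs at which a re-authentication check should fire."""
    triggers = []
    absent_since = None
    last_reason = None
    for f in sorted(frames, key=lambda fr: fr.t):
        reason = None
        if f.face_count == 0:
            if absent_since is None:
                absent_since = f.t
            if f.t - absent_since >= absence_s:
                reason = "extended_face_absence"
        else:
            absent_since = None
            if f.face_count > 1:
                reason = "multiple_faces"
            elif f.similarity is not None and f.similarity < min_similarity:
                reason = "face_continuity_break"
        # Emit only when the condition changes, so one long event raises one re-check
        if reason is not None and reason != last_reason:
            triggers.append((f.t, reason))
        last_reason = reason
    return triggers
```

Emitting a trigger only on a change of condition is what keeps the checks low-friction: a candidate who steps away for a minute gets one re-check, not one per frame.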

Layer 4 - Behavioral biometrics and anomaly detection

Behavioral biometrics measure patterns like keystroke dynamics, typing cadence, and interaction telemetry. These signals matter because they are hard to replicate. If a proxy test taker takes over mid-exam, patterns often shift. Response-time anomalies can also indicate off-camera assistance. Long silent gaps followed by rapid correct answers are common in assisted proxy testing.
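A very simple version of a typing cadence check compares inter-key intervals in a recent window against a baseline captured earlier in the same session. This sketch uses only the mean interval and a z-score, assuming roughly independent intervals; production keystroke dynamics typically model per-key-pair dwell and flight times instead. The function names and the threshold of 3.0 are hypothetical.

```python
import math
import statistics


def inter_key_intervals(key_times):
    """Seconds between consecutive keydown timestamps."""
    return [b - a for a, b in zip(key_times, key_times[1:])]


def cadence_shift(baseline_times, window_times, z_threshold=3.0):
    """Flag a window whose mean inter-key interval departs from the baseline.

    Returns (flagged, z). z is the window mean's distance from the baseline
    mean in standard errors, assuming roughly independent intervals.
    """
    base = inter_key_intervals(baseline_times)
    cur = inter_key_intervals(window_times)
    if len(base) < 2 or not cur:
        return False, 0.0  # not enough data to compare
    mu = statistics.mean(base)
    sd = max(statistics.stdev(base), 1e-6)  # floor avoids division by zero
    se = sd / math.sqrt(len(cur))
    z = (statistics.mean(cur) - mu) / se
    return abs(z) >= z_threshold, z
```

A flag here is a pointer for reviewers, not a conclusion: fatigue or a hard question can also slow typing, which is why this signal is rated Medium on its own.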

Behavior analytics should never auto-punish. It should trigger review and guide investigators to the right timeframe. That reduces reviewer workload and improves fairness.
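To guide investigators to the right timeframe, the "long silent gap followed by rapid correct answers" pattern can be turned into review windows rather than verdicts. This sketch assumes an answer log with submission times and correctness; the `Answer` type and the thresholds (300-second gap, three answers, 20 seconds apart) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Answer:
    question_id: str
    submitted_at: float  # seconds since session start
    correct: bool


def gap_then_burst_windows(answers, gap_s=300.0, burst_n=3, burst_s=20.0):
    """Return (start, end) windows where a long silent gap is followed by
    a run of quick, correct answers. Output is for human review only."""
    ordered = sorted(answers, key=lambda a: a.submitted_at)
    windows = []
    for i in range(1, len(ordered)):
        gap = ordered[i].submitted_at - ordered[i - 1].submitted_at
        if gap < gap_s:
            continue
        run = ordered[i:i + burst_n]
        if len(run) < burst_n or not all(a.correct for a in run):
            continue
        # Every answer in the run must follow the previous one within burst_s
        quick = all(
            run[k].submitted_at - run[k - 1].submitted_at <= burst_s
            for k in range(1, len(run))
        )
        if quick:
            windows.append((ordered[i - 1].submitted_at, run[-1].submitted_at))
    return windows
```

Each window spans the silence plus the burst, so a reviewer can jump straight to that segment of video and device logs instead of watching the whole session.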

Layer 5 - Device integrity and secure browser controls

A secure exam browser reduces the bypass surface. It can limit screen capture, block remote access tools, and prevent common cheating utilities. For impersonation specifically, device integrity checks matter. Virtual machine detection helps prevent candidates from running the exam inside a virtualized environment where tools on the host, or a helper, operate outside the browser's view. Virtual camera detection helps prevent video injection and synthetic identity attempts. Device fingerprinting helps identify sudden changes that align with takeover behavior.
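Device fingerprint change detection can be as simple as hashing a canonical snapshot of device attributes at session start and comparing later snapshots. The attribute names below are hypothetical examples; which attributes a browser or agent can actually read varies by platform, and labels such as a camera name are easy to spoof, so this is a correlation signal rather than proof.

```python
import hashlib
import json


def fingerprint(attrs):
    """Stable SHA-256 over a canonical JSON form (key order does not matter)."""
    canonical = json.dumps(attrs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def device_change(start, current):
    """Return the sorted names of attributes that differ (empty if identical)."""
    if fingerprint(start) == fingerprint(current):
        return []
    keys = set(start) | set(current)
    return sorted(k for k in keys if start.get(k) != current.get(k))
```

Storing only the hash is enough to detect that something changed; keeping the attribute diff tells a reviewer what changed, for example a new camera label appearing minutes after a face continuity break.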

In regulated exams, this layer often determines whether the program is defensible. It is also one of the strongest differentiators between consumer-grade proctoring and enterprise exam integrity platforms.

Commercial insert

If your biggest concern is bypass through VM, remote desktop, or virtual webcams, TrustExam.ai focuses on multisignal detection across video, device, and behavior. That approach improves evidence quality for investigations and reduces dependence on live proctors. See AI online proctoring capabilities here: https://trustexam.ai/online-proctoring

Human review, evidence packets, and appeals

Automation should flag risk. Humans should decide outcomes. The review model should be built into the program from day one. When an event is flagged, reviewers need an incident timeline with timecoded video snippets, key device events, and a short narrative of why the session is at risk. That is what I mean by an evidence packet. It reduces subjectivity and makes appeal handling consistent.
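An evidence packet is essentially a structured, time-sorted record. The sketch below shows one possible shape: a session ID, a short narrative, and events with HH:MM:SS timecodes and optional pointers to video snippets. The field names and the `clip_ref` path format are hypothetical, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


def timecode(seconds):
    """Format seconds since session start as HH:MM:SS."""
    s = int(seconds)
    return f"{s // 3600:02d}:{s % 3600 // 60:02d}:{s % 60:02d}"


@dataclass
class Event:
    t: float                        # seconds since session start
    source: str                     # e.g. "face", "device", "behavior"
    description: str
    clip_ref: Optional[str] = None  # hypothetical pointer to a stored video snippet


@dataclass
class EvidencePacket:
    session_id: str
    narrative: str                  # short reviewer-facing reason for the flag
    events: List[Event] = field(default_factory=list)

    def to_json(self):
        """Serialize with the timeline sorted and timecoded for reviewers."""
        timeline = [
            {"timecode": timecode(e.t), **asdict(e)}
            for e in sorted(self.events, key=lambda e: e.t)
        ]
        return json.dumps(
            {"session_id": self.session_id, "narrative": self.narrative, "timeline": timeline},
            indent=2,
        )
```

Because every packet has the same shape, reviewers and appeal panels see the same evidence in the same order, which is what makes outcomes consistent.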

Appeals should be expected. Your policy should state what evidence is reviewed, who reviews it, and what outcomes are possible. This protects candidates and protects the institution.

Comparison table - impersonation detection methods

| Method | Evidence strength | Cost | Scalability |
|---|---|---|---|
| ID check at start | Medium | Low | High |
| Face match + liveness | Medium-High | Medium | High |
| Continuous face presence + re-check triggers | High | Medium | High |
| Behavioral biometrics (keystroke dynamics, timing) | Medium | Medium | High |
| Secure browser + VM detection | High | Medium | High |
| Virtual camera detection | High | Medium | High |
| Human live proctoring only | Medium | High | Low |

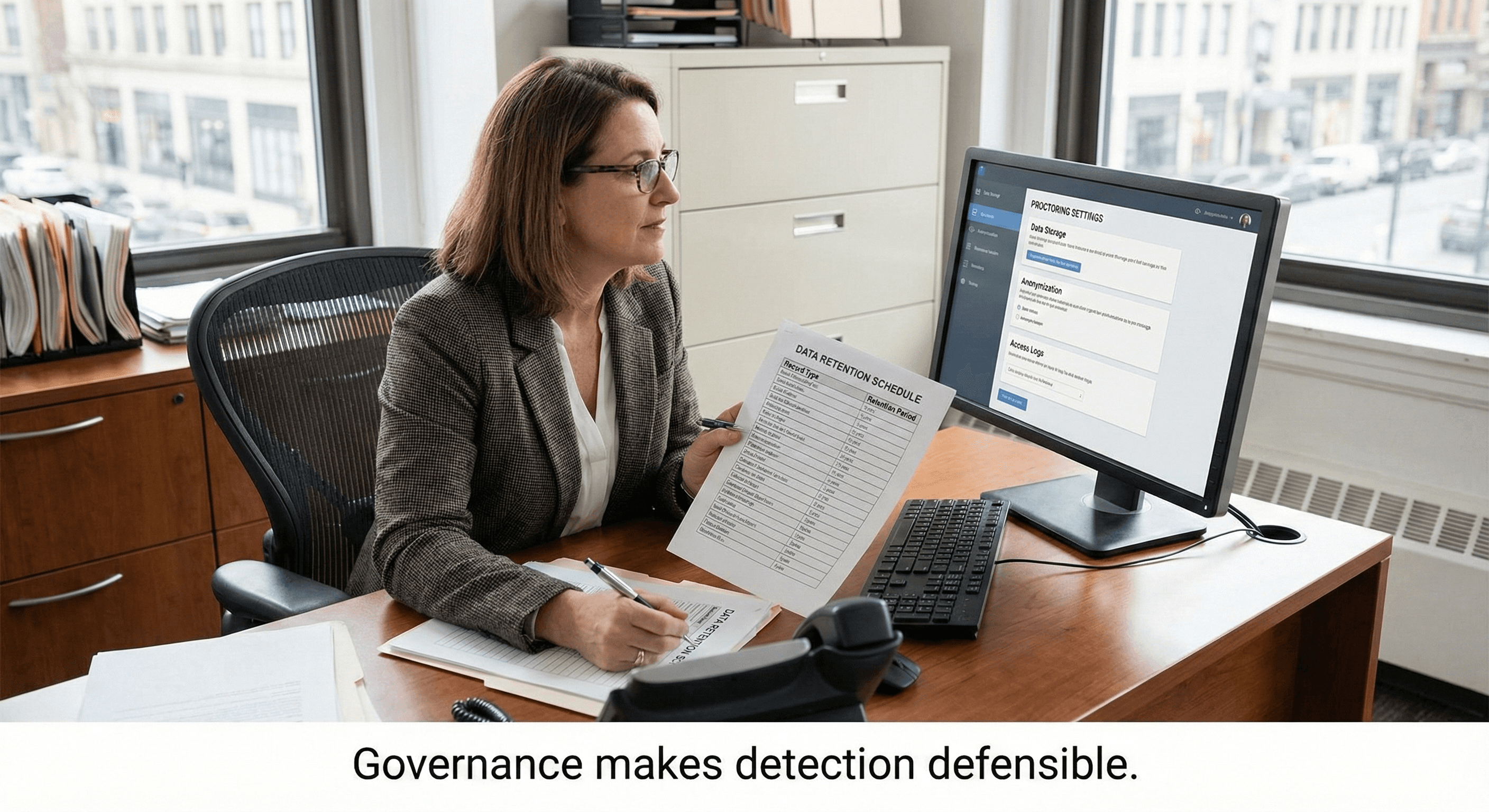

Privacy, transparency, retention, and fairness

Impersonation controls often involve biometric verification and monitoring. That requires governance. Programs should follow data minimization, set a limited retention schedule, and control access. Candidates should receive a clear consent notice that explains what is collected, why it is collected, and how long it is stored. Review decisions should be explainable. AI should not be the judge. It should be a risk triage tool.
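A retention schedule only protects candidates if something enforces it. This sketch assumes records are tagged with a data category and a timezone-aware creation time; the category names and day counts in `RETENTION_DAYS` are placeholders, not legal guidance. Holding records tied to an open appeal is an added assumption based on the appeals process described earlier.

```python
from datetime import datetime, timedelta, timezone

# Placeholder values; real periods come from the program's legal and policy review
RETENTION_DAYS = {"video": 30, "biometric_template": 30, "evidence_packet": 365}


def due_for_deletion(records, now=None, schedule=None, on_hold=frozenset()):
    """Return IDs of records past their retention period.

    records: iterable of dicts with 'id', 'category', and 'created_at' (aware datetime).
    on_hold: IDs tied to open appeals, which are never returned.
    """
    now = now or datetime.now(timezone.utc)
    schedule = schedule or RETENTION_DAYS
    due = []
    for r in records:
        days = schedule.get(r["category"])
        if days is None:
            continue  # unknown category: skipped here; route to manual handling (not shown)
        if r["id"] in on_hold:
            continue
        if now - r["created_at"] > timedelta(days=days):
            due.append(r["id"])
    return due
```

Running this on a schedule and logging what was deleted gives auditors evidence that the stated retention period is actually applied.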

For policy framing, many institutions reference their own academic integrity policies and align with guidance from organizations like NIST on digital identity concepts, as well as university exam integrity policies that set transparent expectations.

If you run government or national exams, you need auditability and procurement-grade controls. TrustExam.ai is used in regulated environments where evidence trails and scalability matter. Explore proctoring for government exams: https://trustexam.ai/who-we-help/government and proctoring for universities and schools: https://trustexam.ai/who-we-help/education

Conclusion

Impersonation in online exams is not solved by a single camera or a single rule. It is solved by layered identity assurance and correlated evidence. Start with identity verification and liveness. Add continuous authentication to prevent mid-exam swaps. Use behavioral biometrics to detect takeovers and collusion patterns. Harden the device layer with secure browser, VM detection, and virtual camera detection. Then make the program defensible with a reviewer workflow, evidence packets, appeals handling, and a clear retention schedule.

The institutions that succeed treat exam integrity as a system. That system protects credential value, reduces disputes, and supports scale without linear staffing growth.

Checklist table - implementation steps for a 2-4 week pilot

| Step | Owner | Deliverable |
|---|---|---|
| Define impersonation scenarios and thresholds | Assessment lead + compliance | Written policy rules |
| Select exam control level (light, standard, strict) | Program owner | Control matrix |
| Configure identity verification and liveness | IT + vendor | Verified onboarding flow |
| Enable secure browser and device integrity checks | IT/security | Device control profile |
| Set continuous authentication triggers | Assessment + vendor | Trigger rules and exceptions |
| Define reviewer workflow and evidence packet format | QA + operations | Review SOP and templates |
| Run pilot with monitored sample cohort | Operations | Pilot report with KPIs |
| Finalize retention schedule and access controls | Legal + security | Retention and access policy |

FAQ

Is face recognition enough to detect impersonation?

No. Face match helps at login, but continuous authentication and device integrity are needed to stop mid-exam swaps and spoofing.

How do we reduce false positives in impersonation detection?

Use correlated signals, not single anomalies. Trigger human review. Define thresholds and an appeals process before launch.

Do we need liveness detection for every exam?

Not always. For low-stakes exams, it may be optional. For high-stakes exams, it is a practical baseline against spoofing.

What is the fastest way to start without disrupting operations?

Run a 2-4 week pilot with a clear control matrix, success KPIs, and a reviewer workflow. Keep triggers risk-based to protect user experience.
